TikTok has finally made it simpler to remove and report harassing comments in its latest update. Going forward, it aims to moderate content as thoroughly as its social network predecessors.

TikTok’s algorithm can rocket both amateur and seasoned creators to viral stardom in an instant, and that raises obvious red flags when it comes to regulating comments and direct messages.

Now, in just its third year, the leading vertical video app is fully aware of the uphill battle it faces to keep its user base safe from cyberbullying and harassment. As we speak, TikTok is planning a comprehensive strategy to meet the moderation standards set in recent times by the likes of Instagram and YouTube.

Considering TikTok’s frankly ridiculous engagement numbers – which are only growing – these changes are definitely welcome.

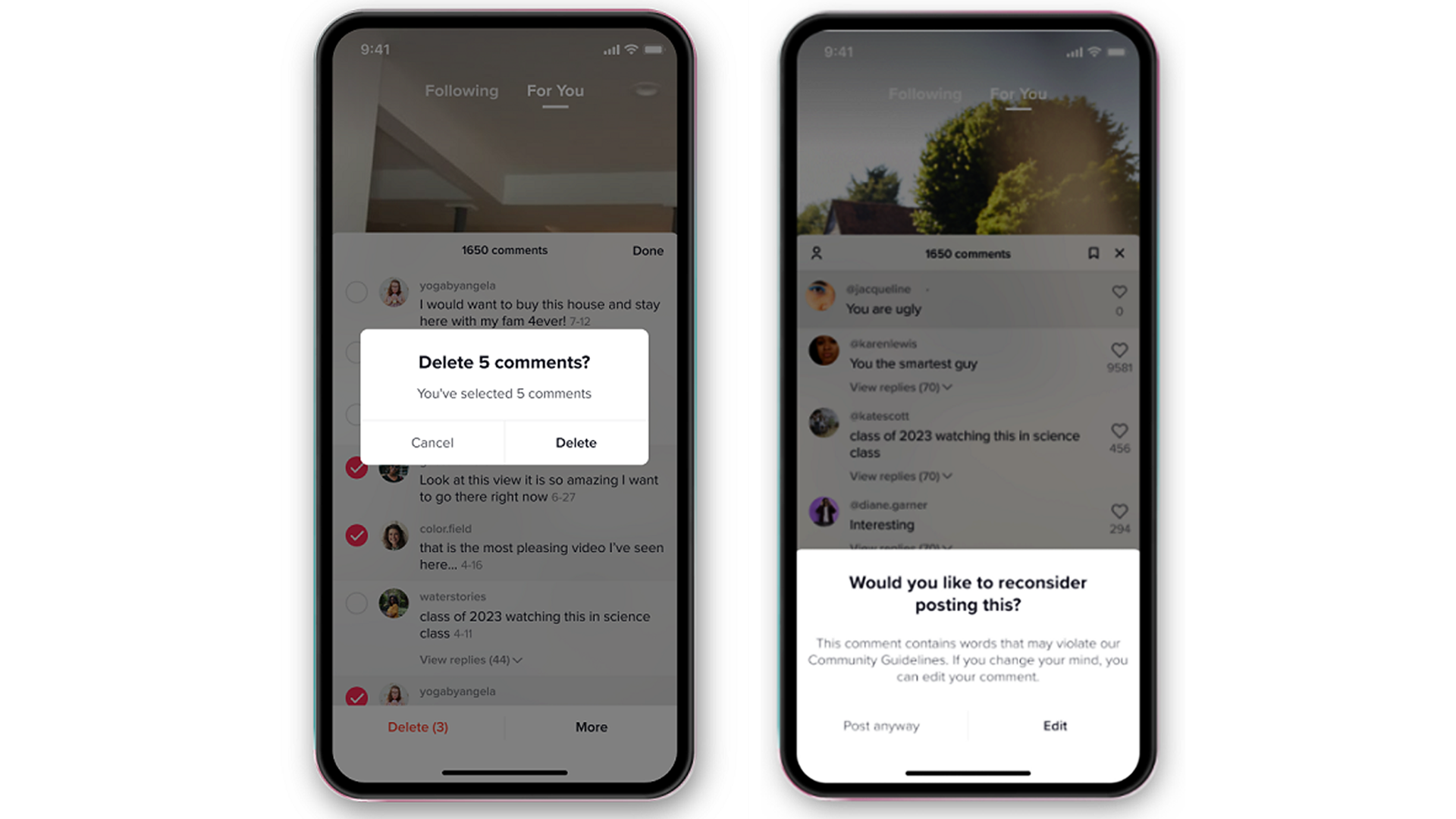

Kick-starting this overhaul is a new update that allows users to select up to 100 comments on their videos at once. From there, they can delete or report the highlighted comments, or block the accounts that posted them, in a few simple actions.

The change is intended to reduce the damaging toll of sifting through hateful and harassing messages, which was especially heavy when they had to be removed one at a time. TikTok believes this user-friendly update will ‘encourage’ creators to take a more active role in building a positive in-app experience.

Prior to this change, TikTok introduced an AI prompt system back in March. In the name of ‘promoting kindness,’ this pop-up system was designed to deter people from posting comments and messages containing trigger words that could cause harm and breach the platform’s community guidelines.