Falling in line with the big social networking sites, TikTok is now attempting to tackle the spread of misinformation on its platform with in-app warnings and fact-checkers.

While TikTok is all about fun and games, its unstoppable rise to the top of mainstream culture now demands a more thorough look into the types of content spreading on its platform. Safety first, people, safety first.

Chief among the concerns amid a global pandemic and political unrest is the spread of misinformation, which TikTok is at last targeting through a slew of future updates.

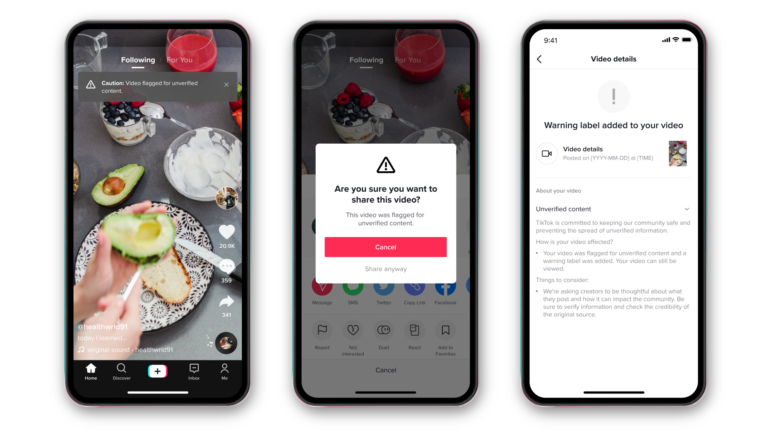

In the coming months, users will start to see warnings displayed on videos containing information that a dedicated team of fact-checkers has been unable to verify. Depending on the video’s overall tone, message, and potential to inflame viewers, TikTok’s algorithm will either instantly limit its distribution or alert moderators, who will take it down completely.

Those relentless enough with their scrolling to find this type of content on their ‘For You’ page will notice it comes with a new warning label, reading, ‘Caution: video flagged for unverified content.’ Any attempt to re-share these videos will prompt an additional message stating that their information is dubious, and offer the option to cancel.

Wary of stifling people’s right to free speech – except where content incites violence or is intolerant – TikTok will still allow flagged videos to be shared, but hopes that these new barriers will dissuade many from following through. In the initial stages, the key topics the platform is paying particular attention to are Covid-19, government elections, and climate change.