New landmark rulings from recent trials might just force social media’s big players into being accountable for child safety. Silicon Valley is reeling.

The past few days have been rough for big tech. The companies that have long positioned themselves above the law have just had the rug pulled out from under them, and things may never be the same.

For years, companies like Meta and Google have relied on Section 230 of the Communications Decency Act (1996), which shields them from liability for user-generated content while allowing them to moderate their platforms as they see fit. Big tech has profited from user content and addictive algorithms while largely escaping liability despite a growing pile of lawsuits.

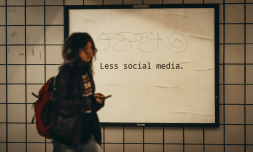

No one has been more affected than the generations born into a digitised world. Though the minimum age for joining social media platforms is 13, nearly 40% of children in the US aged 8 to 12 still have accounts.

With teens spending more hours on average on apps such as TikTok, YouTube, and Instagram, concern about their mental health and habits has been rising. Yet laws like Section 230 have prevented social media companies from being held legally accountable for the state of play they’ve created.

However, years of apathy towards the situation have, to the surprise of many, just been upended by a landmark ruling that may force social media companies into a future of compliance.

The seemingly impossible has happened.

The New Mexico case

In 2023, the state’s Attorney General, Raúl Torrez, and his team created fake profiles imitating children aged 14 and under on Facebook and Instagram. They found that these accounts were almost immediately flooded with sexual solicitations, explicit images, and messages from predators.

The court concluded that Meta knew about the lack of safety protections on its platforms, and yet engaged in unconscionable trade practices, exploiting the vulnerabilities of children.

Testimony was also given that the company’s decision to implement end-to-end encryption on Messenger blocked law enforcement from accessing crucial evidence needed to prosecute child exploitation. The state also argued that features like ‘infinite scroll’ and ‘autoplay’ on the apps were intentionally designed to keep minors hooked, contributing to mental health crises like addiction.

At the end of the trial, the court reached a momentous decision: Meta was fined $375 million, at $5,000 per violation, and you can bet the reparations won’t end there. With this shift in momentum, it’ll now be open season on social media tycoons.