As its adversaries integrate autonomous AI systems into their militaries, the US is aiming to secure equal if not greater strategic leverage. However, doing so may come at the expense of ethical frameworks that AI companies are built on.

While digital tech has long been the backbone of global militaries, we have now entered the Agentic Era, where digitalization is finally giving rise to AI autonomy.

Throughout 2024 and 2025, it seemed like the US was falling behind on this front: China was already using LLMs to supercharge its cyberattacks, and Russia was testing AI-driven drones in its war against Ukraine.

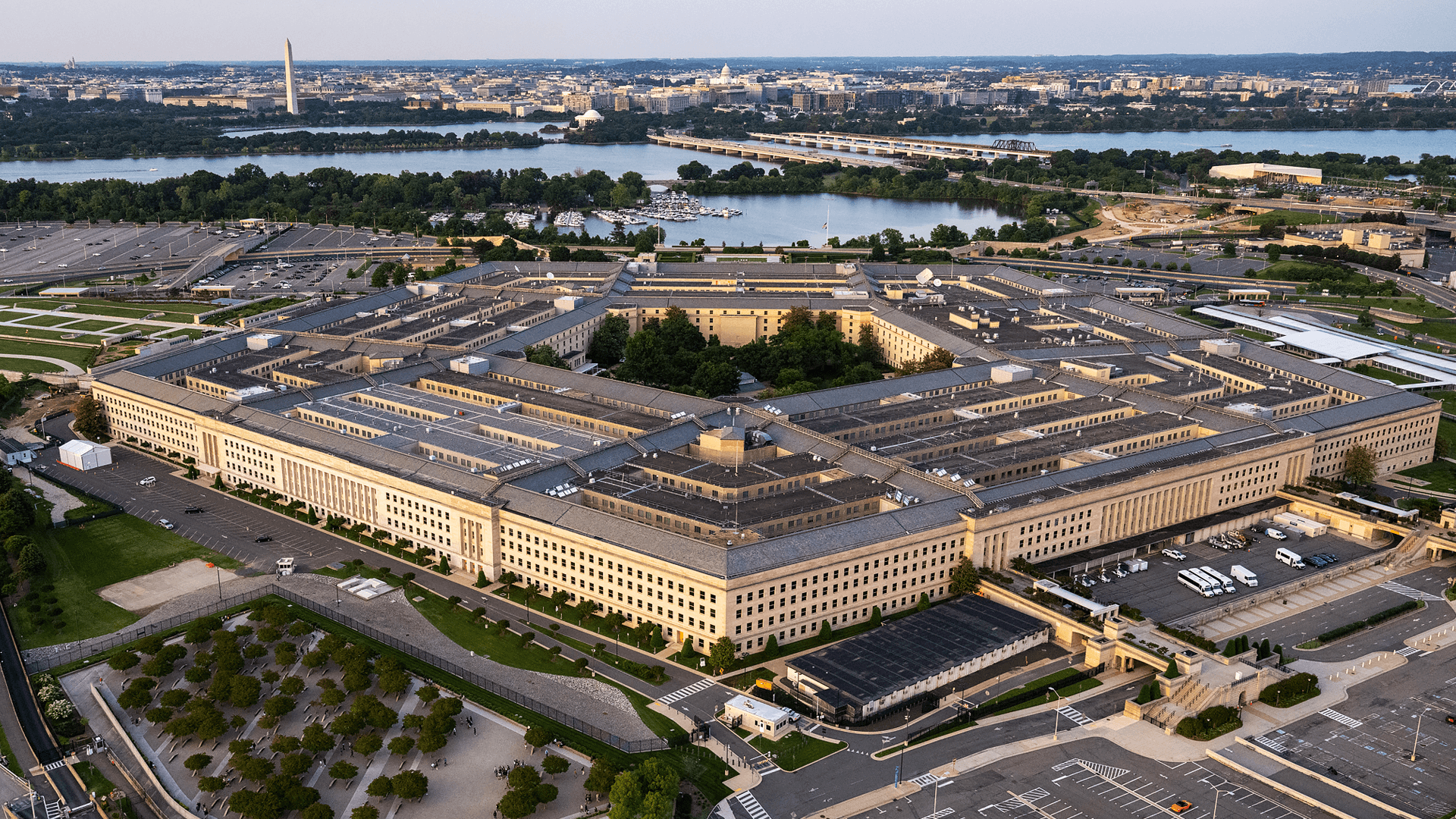

This gap was the catalyst for four contracts, worth $200 million each, signed last July between the Pentagon and four of the biggest AI companies. These companies, OpenAI, Google, Anthropic and xAI, were tasked with bringing the US out of its AI chatbot phase and pushing it towards weaponizing frontier models to stay ahead in global military rankings.

Under these landmark deals, frontier models like GPT-4o, Gemini and Grok have been embedded into GenAI.mil, a secure and classified ecosystem designed for high-stakes operations. In doing so, the Pentagon made its core objective clear: to weaponize speed.

Rather than relying on human capacity alone, the Department of Defense (DoD) seeks to delegate data-intensive analysis, where humans are prone to error, to AI systems that can carry it out faster. Ultimately, speeding up this process and reducing the margin of error dramatically cuts the time required to identify and neutralize threats.

These original contracts were structured to be more collaborative and less authoritative. Specifically, the Pentagon and the AI companies all agreed to work together to find responsible ways of using AI for national security.

The DoD had accepted the safety guidelines and red tape that each of these companies had regarding the usage of their AI models. Some of these stipulations were to ensure no fully autonomous lethal force and no mass domestic surveillance, meaning the model would always have humans accountable for its actions.

However, the honeymoon phase that came with the July contracts ended abruptly in January 2026 after the capture of Venezuelan leader Nicolas Maduro. The arrest of such a high-profile individual, let alone on Trump’s orders, made global headlines.

But that wasn’t all that shocked the world, for it was later leaked that Anthropic’s Claude AI had a hand in assisting the mission’s tactical planning. Pointing to the clear violation of the previously set parameters for military use of AI, Anthropic was understandably horrified.

Following this, the company’s CEO Dario Amodei met with Defense Secretary Pete Hegseth, who gave Anthropic an ultimatum: sign a new contract clause allowing the military to use Claude for ‘any lawful purpose’.

Yet Amodei stood his ground and released a blog post 24 hours before the offer’s expiry, in which he emphasized that his company could not in ‘good conscience accede’ to Hegseth’s demands. He further argued that AI has not reached a threshold where its usage can justify autonomous killing and domestic surveillance.