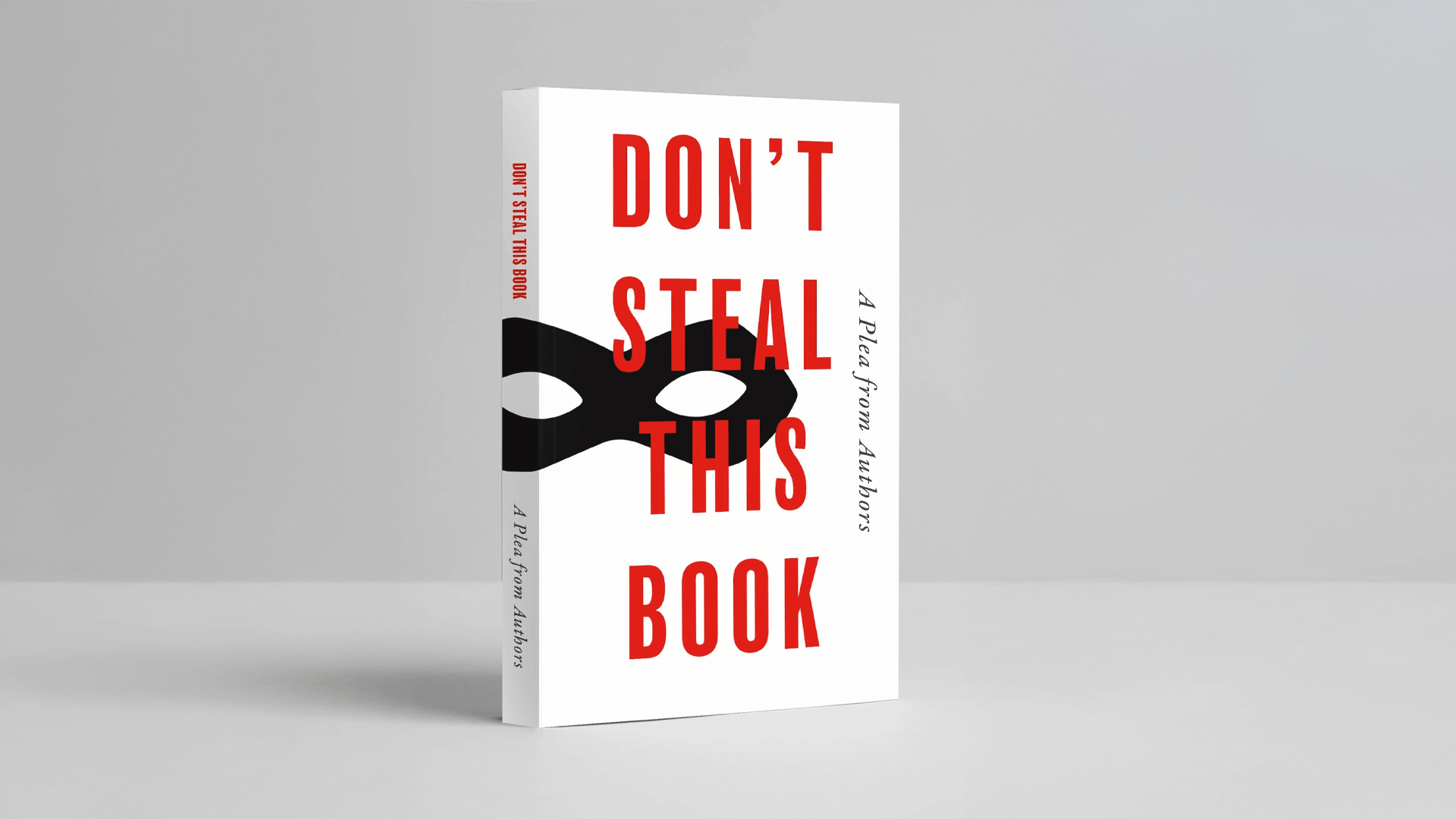

Over 10,000 writers have contributed to ‘Don’t Steal This Book,’ an empty publication that only contains a list of their names. Organised by Ed Newton-Rex, the book will be given out for free at the London Book Fair in protest of AI tools stealing the intellectual property of creatives.

Thousands of writers have added their names to ‘Don’t Steal This Book,’ an ‘empty’ publication that has been printed to protest the unethical use of AI in creative spaces.

The book will be handed out for free to visitors at the London Book Fair this week. The protest comes only days before the government assesses the economic cost of proposed changes to copyright law, which may include a ‘commercial research exception’ for tech companies. Such an exception would mean that tech firms would not need to ask permission to use creative works in their AI models.

On its back cover, the book reads: ‘The UK government must not legalise book theft to benefit AI companies.’ It is a direct response to these proposed law changes, as creatives grow increasingly worried about artistic ownership.

‘Don’t Steal This Book’ was organised by Ed Newton-Rex, a composer who is campaigning to protect the copyright of artists and authors. He spoke to The Guardian about the framework underlying most AI generative tools, explaining that they’re ‘built on stolen work’ and lack any ‘permission or payment’ from the original, human creator.

‘It is not in any way unreasonable to expect AI companies to pay for the use of authors’ books,’ added Malorie Blackman, writer of Noughts and Crosses.

Tools like ChatGPT, Midjourney, and Sora generate text, images, and video from user-written prompts. The output is produced almost instantly, and the tools have been widely adopted over the past few years, including by some of the biggest brands, such as Coca-Cola and McDonald’s.

While they may be convenient for users and companies, the artwork or text that is created is inherently derivative and built on the originality of human artists. AI, by definition, can only ‘create’ based on a network of references and source material.

The technology has expanded so rapidly over the past few years that copyright law has not kept pace, leading to intellectual property theft and lawsuits in both the UK and the US. The term ‘AI slop’ is often thrown around online, as users post AI-generated images of famous intellectual properties all over social media.

There is concern about the future rights of creatives, as AI essentially gobbles up their ideas and replicates them without quality control or consent from those it is stealing from. The UK government hasn’t settled on any one system yet, leaving room for potential problems and copyright issues further down the line.
