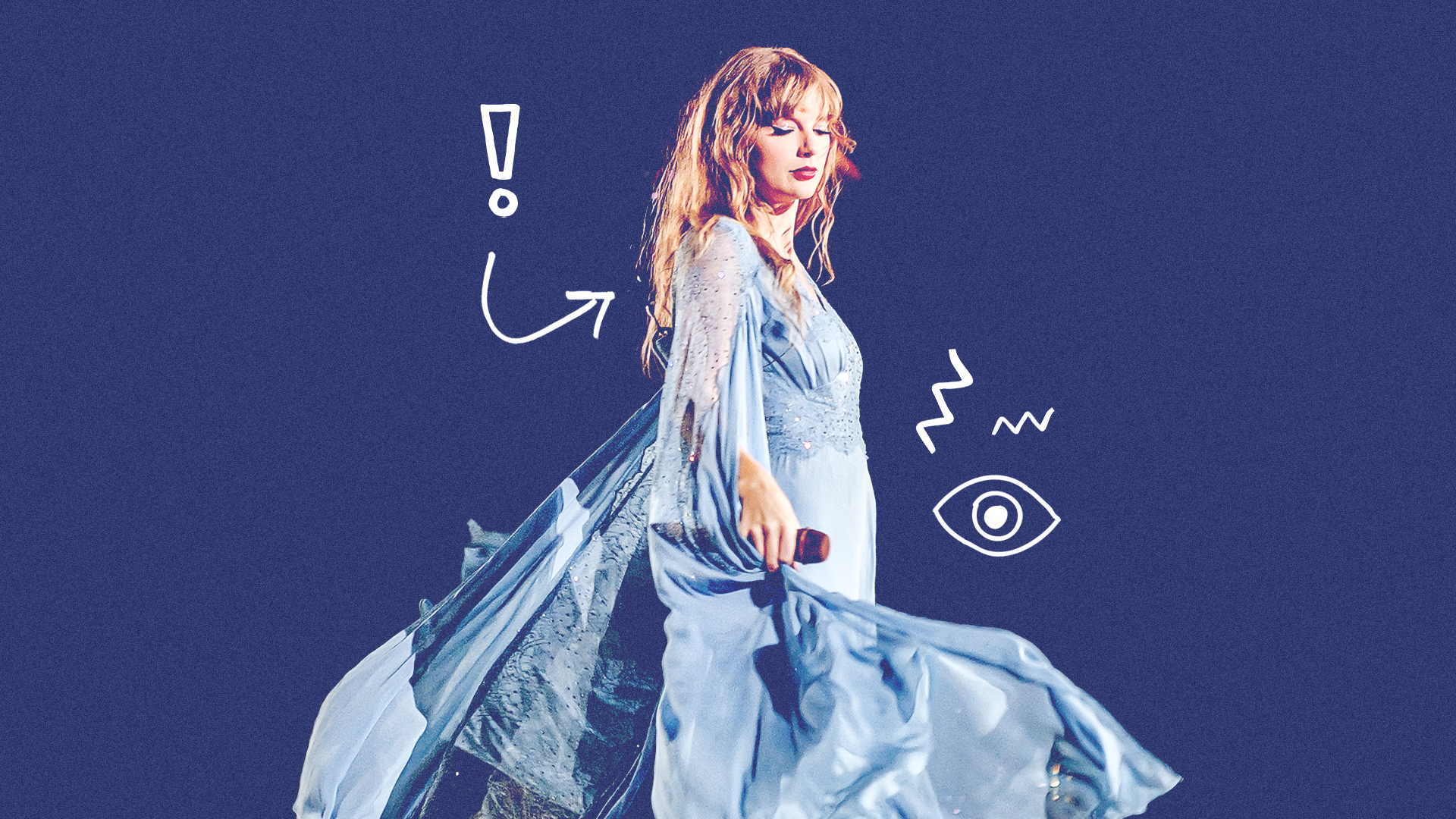

Last week, explicit images of Taylor Swift created using AI were shared across Twitter (X), with some posts gaining millions of views. The ensuing legal panic could have ramifications for the use of celebrity likeness, and AI images in general.

Taylor Swift was the victim of AI image generation last week. Explicit, pornographic images were created without her consent and shared across X (formerly Twitter) by thousands of users.

The posts were viewed tens of millions of times before they were removed and scrubbed from the platform.

The ensuing fallout has been swift, with X tweaking its censorship filters over the weekend to remove any mention of the images. US politicians are calling for new laws to criminalise deepfakes as a direct result, and Microsoft has committed to adding more guardrails to its Designer app to prevent future incidents.

Posting Non-Consensual Nudity (NCN) images is strictly prohibited on X and we have a zero-tolerance policy towards such content. Our teams are actively removing all identified images and taking appropriate actions against the accounts responsible for posting them. We’re closely…

— Safety (@Safety) January 26, 2024

These latest developments in the deepfake controversy follow many years of unethical pornographic content online, most of which strips victims of autonomy over their likeness. It’s a problem that concerns celebrities and ordinary people alike, as AI tools become more commonplace and accessible for anyone to use.

Taylor’s high-profile status and devoted fanbase have helped push this issue to the forefront of current news, and will no doubt alert policy makers, social media platforms, and tech companies in a way that we haven’t seen up until now.

While change and stricter laws should have been put into place a long while ago, we’re likely to see much-needed progress in the coming weeks and months. Ramifications could be wide-reaching and affect AI image generation in general – not just celebrity likenesses or explicit content.

How could the legal fallout affect AI image generation in the future?

So, what specifically is happening legally to combat Taylor’s deepfake AI content?

On Tuesday, a bipartisan group of US senators introduced a bill that would criminalise the spread of nonconsensual, sexualised images generated by AI. The bill would allow victims to seek a civil penalty against ‘individuals who produced or possessed the forgery with intent to distribute it’.

Anyone who receives such images or material knowing they were not created with consent would also be liable.

Dick Durbin, US Senate majority whip, and senators Lindsey Graham, Amy Klobuchar, and Josh Hawley are behind the bill. It is being called the ‘Disrupt Explicit Forged Images and Non-Consensual Edits Act of 2024’, or ‘Defiance Act’ for short.

News of the explicit images also reached the White House. Press Secretary Karine Jean-Pierre told ABC News on Friday that the government was ‘alarmed by reports of circulating images’.

All this follows another bill, the No AI FRAUD Act, which was introduced on January 10th, 2024.

This aims to create a ‘federal, baseline protection against AI abuse’, and uphold First Amendment rights online. It places particular emphasis on an individual’s rights to their likeness and voice against AI forgeries. If passed, the No AI FRAUD Act would ‘reaffirm that everyone’s likeness and voice is protected and give individuals the right to control the use of their identifying characteristics’.

Of course, legal protection is one thing; actually enforcing it across the whole internet is another. There is also the contentious issue of free speech and expression, and of determining where punishment should fall.

Should mediating software platforms that make AI images possible be restricted or punished? We don’t limit Photoshop, for example, despite it being a prominent tool to create misleading images. Where should AI companies stand legally here? It’s currently unclear.

Lawmakers propose anti-nonconsensual AI porn bill after Taylor Swift controversy https://t.co/3PS8h0ULxO

— The Verge (@verge) January 31, 2024